Posts Tagged ‘Windows 8’

Kinect, Unity 3D and Debugging

In this article, I was planning to investigate the Kinect 2.0 SDK further, but I found a better topic: debugging Unity 3D applications with Visual Studio. Because I am not yet a professional Unity 3D developer, I had never thought about debugging Unity 3D applications. But in the last article I wrote about my first experience integrating Kinect and Unity, and, frankly speaking, preparing that demo gave me a headache because I could not debug my demo scripts. Of course, the Kinect 2 SDK was new to me, so I used many Debug.Log calls to understand what was happening with my variables.

I know that MonoDevelop allows you to debug Unity scripts, but I don't like this tool. So I did not debug my scripts for some time and returned to this idea only two days ago. I was very surprised to find that there is an easy way to enable debugging in Visual Studio: you just need to download and install Visual Studio Tools for Unity.

These tools were created by the SyntaxTree team, which Microsoft acquired in June-July this year. Microsoft immediately announced that the tools would be free for all developers, and the first Microsoft version was released at the end of July. The tools integrate with Visual Studio 2010, 2012 and 2013, so you can use them with older versions of Visual Studio as well.

I have Visual Studio 2013, so I simply installed the package and enabled debugging in my project.

To enable debugging in an existing project, you need to import the package into it. If the tools are installed successfully, you will find a new item for it in Unity's Import Package menu.

If you create a new project, you can add the package right in the Create New Project dialog.

The package adds some scripts to your project that extend the standard Unity menu. It also contains components that support the communication channel between Visual Studio and Unity.

In my case, the package imported successfully, so I opened the project in Visual Studio to start debugging. It was pretty simple: I just set some breakpoints and pressed F5. Visual Studio switched to Debug mode, and I returned to Unity to start my application there. After that, I clicked the Play button in Unity and was switched back to Visual Studio, where one of my breakpoints had been hit.

You can press and release the Play (or Pause) button as many times as you want. This restarts or pauses your game but does not affect Visual Studio, which stays in Debug mode. To exit Debug mode, just click Stop Debugging in Visual Studio.

To summarize, I spent a minute and got a very powerful tool for my future Unity projects.

Kinect 2 and Unity 3D: My first experience

Several days ago, I got my first Kinect 2 for Windows device to prepare a presentation with Dmytro Mindra for the Developer Day event in Ukraine. I now have some experience with Kinect, and I am going to share it in my blog.

Before writing any code, we need to download the Kinect for Windows SDK 2.0. The SDK is in Public Preview now, but it allows you to create Windows Store applications (the previous version did not), so I was happy to install it. I found it here: http://www.microsoft.com/en-us/download/details.aspx?id=43661.

Once the SDK was installed, I was able to review some examples and tools. I recommend the SDK Browser, which can be found in C:\Program Files\Microsoft SDKs\Kinect\v2.0-PublicPreview1409\Tools\SDKBrowser. This is a very useful tool that shows you the entire SDK structure: tools, examples and links to the documentation.

I recommend the Body Basics-XAML example to check that your Kinect works correctly, or you can use Kinect Studio to do some experiments with the sensor :) Because my Kinect was working fine, I could start thinking about some demos.

Kinect is a very powerful device, and you can use it in many ways: as a sensor for your home robot or as part of your security system. But the most popular use of Kinect is entertainment: it allows you to create many good games, and many such games are already available for Xbox. At the same time, I had no desire to create a game in C++/DirectX, so I was looking for a solution for Unity 3D, because it is one of the best game engines and I like it.

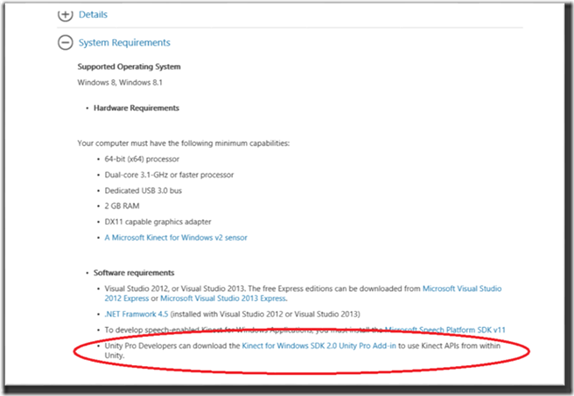

Some time ago, I heard about a Kinect add-in for Unity 3D, but I had to spend a lot of time finding it. I tried several search engines, then I called the Unity 3D guys, and finally I found it in a very unusual place: the Kinect for Windows SDK 2.0 download page. The link is collapsed by default, and you need to expand the System Requirements section to find it.

Note that this add-in works only with Unity Pro, because the free version of Unity does not support add-ins. There may be a way around this for Windows Store applications, because the non-Pro version allows add-ins for this type of application, but I will check it later; for now I use the Pro version.

Once you download the add-in, you will find the unitypackage file as well as two examples inside it. We already had a small game that lets us kill "friendly green men" with our stellar battleship, and we decided to add some Kinect-based controls to make the killing process more fun.

We implemented this in three steps, which let us use our bodies to control the game.

In the first step, we found the MonoBehaviour responsible for moving our ship and added some new code to it:

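```csharp
// Requires "using Windows.Kinect;" at the top of the script (namespace of the Kinect Unity add-in)
private KinectSensor _Sensor;     // the Kinect sensor
private BodyFrameReader _Reader;  // reads frames from the body frame source
private Body[] _Data = null;      // buffer that receives data for every body in a frame
```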

This code should be clear, but I will describe it. To access the sensor we needed the KinectSensor class, which initializes the Kinect and provides its data sources. In our case, we needed the body frame source, so we created a BodyFrameReader variable to read data from it. Finally, we created a Body array to store the data read from the source.

In the next step, we needed to initialize the Kinect and open our reader. That's why we implemented the following code:

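```csharp
void Start()
{
    // Get the default Kinect sensor
    _Sensor = KinectSensor.GetDefault();

    if (_Sensor != null)
    {
        // Open a reader on the body frame source
        _Reader = _Sensor.BodyFrameSource.OpenReader();

        // Start the sensor if it is not running yet
        if (!_Sensor.IsOpen)
        {
            _Sensor.Open();
        }
    }
}
```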

We initialized the Kinect inside Start(), a method very familiar to Unity 3D developers, and opened our reader there.

Finally, we implemented a method, called from the Update method of our behaviour class, that collected data from the sensor and calculated the new position of the ship as well as the fire state:

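```csharp
horizontal = 0;
vertical = 0;
fireButton = false;

if (_Reader != null) {
    // AcquireLatestFrame returns null when no new frame is available
    var frame = _Reader.AcquireLatestFrame ();

    if (frame != null) {
        // Allocate the buffer once; BodyCount is the maximum number of bodies (6)
        if (_Data == null) {
            _Data = new Body[_Sensor.BodyFrameSource.BodyCount];
        }

        frame.GetAndRefreshBodyData (_Data);

        // Release the frame as soon as the data is copied. Disposing it only
        // inside this null check avoids a NullReferenceException when no frame arrived.
        frame.Dispose ();

        // Look for a body whose lean is tracked; the last one found wins
        int idx = -1;
        for (var i = 0; i < _Data.Length; i++) {
            if (_Data [i] != null &&
                _Data [i].LeanTrackingState != TrackingState.NotTracked) {
                idx = i;
            }
        }

        if (idx > -1) {
            // Fire while the left hand is above the sensor's height (Y > 0 in camera space)
            fireButton =
                _Data [idx].Joints [JointType.HandLeft].Position.Y > 0;

            // Leaning left/right and forward/back moves the ship
            horizontal = _Data [idx].Lean.X;
            vertical = _Data [idx].Lean.Y;
        }
    }
}
```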

This code is more complex, and Dima said that I should not show it because it's not optimal, but you will probably write similar code for your first Kinect application for Unity 3D :)

So, in this code, we used the reader to grab the current (latest) body frame, which contains the body data. Kinect 2 can track up to 6 bodies, so _Sensor.BodyFrameSource.BodyCount should return 6. Using the latest frame, we filled the Body array with data for each body in the frame. At this point we were done with the sensor and started analyzing the body data. If you have just one ship (in our case we had two) and no dedicated interface for Kinect initialization, you do not know which slot in the array holds the real player. That's why we tried to find the index of the pilot's body. Because we used leaning as the main control mechanism for the ship, we checked the LeanTrackingState of every body. When we found a tracked body, we stored its index (if several bodies are tracked, the loop keeps the last one) and recalculated the position of the ship as well as the fire state.
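To make the pilot-selection rule easier to test outside Unity, here is a minimal sketch of the same logic in plain C#. It does not use the Kinect SDK: `BodySample`, `ShipInput` and `PilotSelector` are hypothetical types that copy only the values the Update code reads (whether the lean is tracked, `Lean.X`, `Lean.Y` and the left hand's Y coordinate). Like the loop above, it keeps the last tracked body it finds.

```csharp
using System.Collections.Generic;

// Hypothetical, SDK-free copy of the few Body values the Update code reads.
public readonly struct BodySample
{
    public BodySample(bool leanTracked, float leanX, float leanY, float leftHandY)
    {
        LeanTracked = leanTracked;
        LeanX = leanX;
        LeanY = leanY;
        LeftHandY = leftHandY;
    }

    public bool LeanTracked { get; }  // LeanTrackingState != TrackingState.NotTracked
    public float LeanX { get; }       // Body.Lean.X
    public float LeanY { get; }       // Body.Lean.Y
    public float LeftHandY { get; }   // Joints[JointType.HandLeft].Position.Y
}

// Hypothetical result type: what the ship controller consumes each frame.
public readonly struct ShipInput
{
    public ShipInput(float horizontal, float vertical, bool fire)
    {
        Horizontal = horizontal;
        Vertical = vertical;
        Fire = fire;
    }

    public float Horizontal { get; }
    public float Vertical { get; }
    public bool Fire { get; }
}

public static class PilotSelector
{
    // Index of the last body whose lean is tracked, or -1 if there is none.
    public static int FindPilot(IReadOnlyList<BodySample> bodies)
    {
        int idx = -1;
        for (int i = 0; i < bodies.Count; i++)
        {
            if (bodies[i].LeanTracked)
            {
                idx = i;
            }
        }
        return idx;
    }

    // Lean drives movement, a raised left hand (Y > 0) fires; neutral input without a pilot.
    public static ShipInput ToInput(IReadOnlyList<BodySample> bodies)
    {
        int idx = FindPilot(bodies);
        if (idx < 0)
        {
            return new ShipInput(0f, 0f, false);
        }

        BodySample pilot = bodies[idx];
        return new ShipInput(pilot.LeanX, pilot.LeanY, pilot.LeftHandY > 0f);
    }
}
```

Keeping this mapping in a plain class lets you unit-test it and change the selection rule later (for example, preferring the body closest to the sensor), while the MonoBehaviour only copies values out of the `Body` objects.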

I think we spent about 30 minutes on this example, and our presentation was a lot of fun. If you know Russian, you can find our video here: http://devday.in.ua. I also cut a short video from our presentation, in which two guys (me and Dima) are doing strange things on stage :)

In the next article, I am planning to integrate Kinect with a simple Windows 8 application and discuss different data sources.

Azure Mobile Services: Notification Hub vs “native development”

If you are reading this article, you are probably a new reader of my blog: I have written many posts before, but they were in Russian only. However, in the near future I will need to switch from Russian and Ukrainian to English, so I decided to do it sooner rather than later, and here is my first post in English! :) I will be happy to hear any feedback from you, which will help me improve my English.

“Native development” scenario

I started learning Microsoft Azure after its first announcement at the PDC conference in 2008, but I got my first real experience only in 2010. At that time, Microsoft started offering a stable version of the platform to clients, and we got several requests from our partners for technical support. During that period, I worked with the biggest Ukrainian media holding and helped deploy several Windows Phone 7 applications, one of which used the Microsoft Push Notification Service.

The client owned the biggest soccer portal in Ukraine, and their technical staff wanted to implement a service that could notify all subscribers of the portal (primarily on WP and iOS) about changes during live soccer games worldwide. I was not a pro in iOS development, so I will describe the solution from the Microsoft platform perspective, but you can easily extrapolate it to any other platform. It was not too hard to build a simple Windows Phone application that showed the latest news and received toast notifications, but on the server side we expected several complex problems. The biggest ones were hardware resources and Internet bandwidth. The portal has hundreds of thousands of visitors during any game, and it was too hard to find more servers and to guarantee an Internet channel good enough to send messages through the Microsoft Push Notification Service to all subscribers within the same short time window. That is why we decided to move the server side of the service to Azure.

In 2010, Microsoft already offered Cloud Services (Web and Worker roles), Storage services (Tables, Blobs and Queues) and SQL Azure. We used them all. Let's look at the schema below; I will try to describe the solution in more detail.


First, we implemented a web service (a Web role) that stored the Notification Channel URIs sent by Windows Phone devices. The Microsoft Push Notification Service generates a unique URI for each device, and this URI is used to send notifications to that device. So if you have 100 devices, you need 100 URIs to send a message to each of them. We used SQL Azure to store information about URIs and users.
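The registration step can be sketched like this. It is only an illustration: `ChannelRegistry` and `ChannelRegistration` are hypothetical names, and a dictionary stands in for the SQL Azure table we actually used. The key design point is that registration is an insert-or-update keyed by device, because a device may report a new channel URI later.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical record for one device: its user and its current channel URI.
public sealed class ChannelRegistration
{
    public ChannelRegistration(string userId, Uri channelUri)
    {
        UserId = userId;
        ChannelUri = channelUri ?? throw new ArgumentNullException(nameof(channelUri));
    }

    public string UserId { get; }
    public Uri ChannelUri { get; }
}

// Hypothetical in-memory stand-in for the SQL Azure table of channel URIs.
public sealed class ChannelRegistry
{
    private readonly Dictionary<string, ChannelRegistration> _byDevice =
        new Dictionary<string, ChannelRegistration>();

    // Insert or update: a device calls this again whenever it gets a new channel URI.
    public void Register(string deviceId, string userId, Uri channelUri) =>
        _byDevice[deviceId] = new ChannelRegistration(userId, channelUri);

    public bool Unregister(string deviceId) => _byDevice.Remove(deviceId);

    public bool TryGetChannel(string deviceId, out Uri channelUri)
    {
        if (_byDevice.TryGetValue(deviceId, out ChannelRegistration registration))
        {
            channelUri = registration.ChannelUri;
            return true;
        }

        channelUri = null;
        return false;
    }

    // One URI per device: notifying N devices means N separate pushes.
    public int Count => _byDevice.Count;

    public IEnumerable<Uri> AllChannelUris()
    {
        foreach (ChannelRegistration registration in _byDevice.Values)
        {
            yield return registration.ChannelUri;
        }
    }
}
```

The example.com-style URIs you would pass in a quick test are placeholders; real channel URIs are issued by the push service.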

In the second step, we created another web service (a separate Web role, although we could have reused the one from the previous step) that received messages from an operator (a person on the client side or some other system). The messages contained information about a game's status that had to be sent to subscribers. Of course, we could not use this web service to deliver the messages to subscribers directly, because delivery could take a long time (up to several hours for a large number of subscribers), and we needed to respond to the operator as soon as possible. That is why the web service stored new messages in a queue in Azure Storage. A queue is designed for concurrent access to its messages, and it works very well when several Worker role instances process them.
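The decoupling between the operator-facing web service and the senders can be illustrated with an in-process analogy. In this sketch, a `ConcurrentQueue<string>` plays the role of the Azure Storage queue, each task started by `Drain` plays the role of one Worker role instance, and the `send` delegate is a hypothetical placeholder for the actual push call to a channel URI. `GameUpdateDispatcher` is a hypothetical name, and none of this is the Azure Storage API.

```csharp
using System;
using System.Collections.Concurrent;
using System.Collections.Generic;
using System.Linq;
using System.Threading;
using System.Threading.Tasks;

public sealed class GameUpdateDispatcher
{
    private readonly ConcurrentQueue<string> _queue = new ConcurrentQueue<string>();
    private readonly IReadOnlyList<Uri> _channels;
    private readonly Action<Uri, string> _send; // hypothetical push call; must be thread-safe

    public GameUpdateDispatcher(IReadOnlyList<Uri> channels, Action<Uri, string> send)
    {
        _channels = channels ?? throw new ArgumentNullException(nameof(channels));
        _send = send ?? throw new ArgumentNullException(nameof(send));
    }

    // Operator-facing call: enqueue and return immediately, without waiting for delivery.
    public void Accept(string message) => _queue.Enqueue(message);

    public int Pending => _queue.Count;

    // Runs workerCount consumers until the queue is empty; returns the number of messages processed.
    public int Drain(int workerCount)
    {
        if (workerCount < 1) throw new ArgumentOutOfRangeException(nameof(workerCount));

        int processed = 0;
        Task[] workers = Enumerable.Range(0, workerCount).Select(_ => Task.Run(() =>
        {
            // TryDequeue hands each message to exactly one worker
            while (_queue.TryDequeue(out string message))
            {
                foreach (Uri channel in _channels)
                {
                    _send(channel, message); // one push per subscriber
                }
                Interlocked.Increment(ref processed);
            }
        })).ToArray();

        Task.WaitAll(workers);
        return processed;
    }
}
```

`Accept` returns as soon as the message is queued, which is why the operator gets a fast response; the slow part (one push per channel URI) happens in the workers, and adding workers increases throughput without sending duplicates. A real Worker role would keep polling instead of stopping when the queue is empty, and Azure Storage queues also hide a message while a worker processes it and can deliver it again if the worker fails, which this sketch does not model.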

In the last step, we created a Worker role that sent the messages to subscribers. We could monitor the queue and increase or decrease the number of Worker role instances based on the number of games, subscribers and messages in the queue.
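One possible rule for adjusting the number of instances is sketched below. It is a hypothetical policy, not the formula we used: `WorkerScaling.DesiredInstances` is an illustrative name, and the throughput per instance and the target delivery time are assumptions you would measure and choose yourself.

```csharp
using System;

public static class WorkerScaling
{
    // Hypothetical policy: enough instances to push every pending message to every
    // subscriber within targetSeconds, clamped to [minInstances, maxInstances].
    public static int DesiredInstances(
        long pendingMessages, long subscribers,
        double sendsPerInstancePerSecond, double targetSeconds,
        int minInstances, int maxInstances)
    {
        if (sendsPerInstancePerSecond <= 0) throw new ArgumentOutOfRangeException(nameof(sendsPerInstancePerSecond));
        if (targetSeconds <= 0) throw new ArgumentOutOfRangeException(nameof(targetSeconds));
        if (minInstances < 0 || maxInstances < minInstances) throw new ArgumentOutOfRangeException(nameof(maxInstances));

        double totalSends = (double)pendingMessages * subscribers;       // one push per message per subscriber
        double capacityPerInstance = sendsPerInstancePerSecond * targetSeconds;
        double needed = Math.Ceiling(totalSends / capacityPerInstance);

        // Clamp in double first so a huge backlog cannot overflow the int cast
        if (needed >= maxInstances) return maxInstances;
        return Math.Max(minInstances, (int)needed);
    }
}
```

For example, with assumed values of 500 pushes per second per instance and a 60-second target, 3 pending messages for 100,000 subscribers mean 300,000 pushes, which gives 10 instances.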

The proposed solution is stable and flexible, but it has some disadvantages. First, the solution is quite complex, and you need to know several Azure features to implement it. Second, you need to be aware of the pricing of the separate services: Cloud Services, Storage and SQL Azure. These disadvantages could stop many developers from implementing such a simple and common scenario as sending notifications to client applications. But this was just the background for "native development" on Azure. Since then, Microsoft has released several good out-of-the-box solutions, and one of them is Azure Mobile Services, which contains a Notification Hub component. Let's look at this component in detail.

Introduction to Notification Hub

Notification Hub lets us work without knowing the details of what happens in the background. We do not need to care about SQL Azure, Worker roles, queues or client platforms. We can still use a Web role to manage authentication, format messages and so on.


In the simplest scenario, we just need the Notification Hub, which provides interfaces both for sending messages from operator applications and for updating notification channels from client devices.

We just need to create a new Notification Hub inside an Azure account and configure it; the service then lets us register a very large number of devices and send messages to them. Additionally, Notification Hub supports different client platforms such as Windows, iOS, Android and others. In other words, Notification Hub is a black box, so we only need to implement the client applications and the "operator" applications. Notification Hub is a ready-to-use implementation of a common scenario, prepared by Microsoft.

Here are some features that could be interesting for developers:

· Platform-agnostic: a developer can configure Notification Hub to send notifications to most client platforms on the market, such as Windows 8, Windows Phone, iOS, Android, Kindle etc. There is also an API for registering devices of all these types;

· Tag support: a developer can send a message to a subset of subscribers using tags. For example, a user can subscribe only to news about local soccer games;

· Template support: you can personalize and localize messages using templates. For example, the operator can send a single message with localized parameters, and the Notification Hub will deliver it using different templates for different devices, so some devices receive the text in Ukrainian and others in English;

· Device registration management: you can plug your own code into the registration pipeline to authorize clients before they register. This is very important for paid services;

· Scheduled notifications: yes, you can schedule notifications for a specific time.
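To make the tag and template features more concrete, here is a small local simulation of the idea. It is not the Notification Hubs SDK: `DeviceRegistration` and `TemplateHub` are hypothetical, and only the `$(property)` placeholder form is borrowed from Notification Hub templates (real templates support richer expressions). The operator sends one set of properties, the tag selects the subscribers, and each device's own template decides which language it receives.

```csharp
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical registration: a device, the tags it subscribed to, and its template.
public sealed class DeviceRegistration
{
    public DeviceRegistration(string deviceId, IEnumerable<string> tags, string template)
    {
        DeviceId = deviceId;
        Tags = new HashSet<string>(tags);
        Template = template;
    }

    public string DeviceId { get; }
    public HashSet<string> Tags { get; }
    public string Template { get; } // e.g. "<text>$(news_uk)</text>"
}

public static class TemplateHub
{
    private static readonly Regex Placeholder = new Regex(@"\$\((\w+)\)");

    // Replaces each $(name) with the operator-supplied value; unknown names become empty.
    public static string Render(string template, IReadOnlyDictionary<string, string> properties) =>
        Placeholder.Replace(template, m =>
            properties.TryGetValue(m.Groups[1].Value, out string value) ? value : string.Empty);

    // One operator send: every registration carrying the tag gets its own rendered payload.
    public static Dictionary<string, string> Send(
        IEnumerable<DeviceRegistration> registrations, string tag,
        IReadOnlyDictionary<string, string> properties)
    {
        var payloads = new Dictionary<string, string>();
        foreach (DeviceRegistration registration in registrations)
        {
            if (registration.Tags.Contains(tag))
            {
                payloads[registration.DeviceId] = Render(registration.Template, properties);
            }
        }
        return payloads;
    }
}
```

For the localized-news case, the operator would send properties such as `news_en` and `news_uk`; a Windows Phone subscriber registered with `<text>$(news_uk)</text>` receives the Ukrainian text, and an iOS subscriber registered with a template using `$(news_en)` receives English, all from a single send call.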

This should give you an idea of the purpose of the Notification Hub. I am planning to show some of its features in the next article.